
In the packages pane, add JAR or WHL files by choosing + Select from workspace packages. If the pool is in use, check the box under Force new settings to restart the pool and make the new libraries available for your next Spark session.

This approach to managing packages is what has worked best for me so far. Hopefully this has simplified the basics of managing Scala/Java libraries on Synapse; for more information and options, see the official documentation.

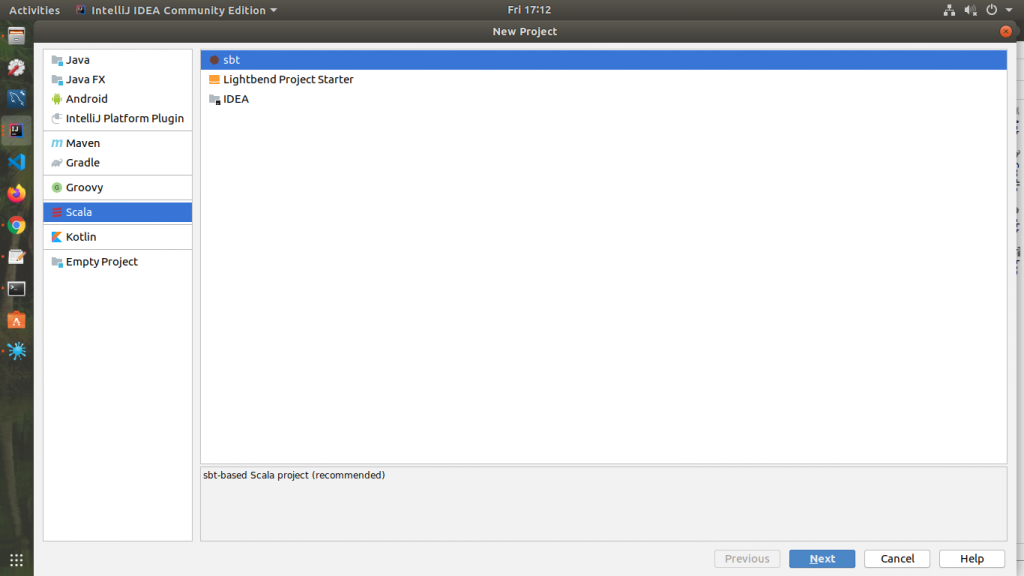

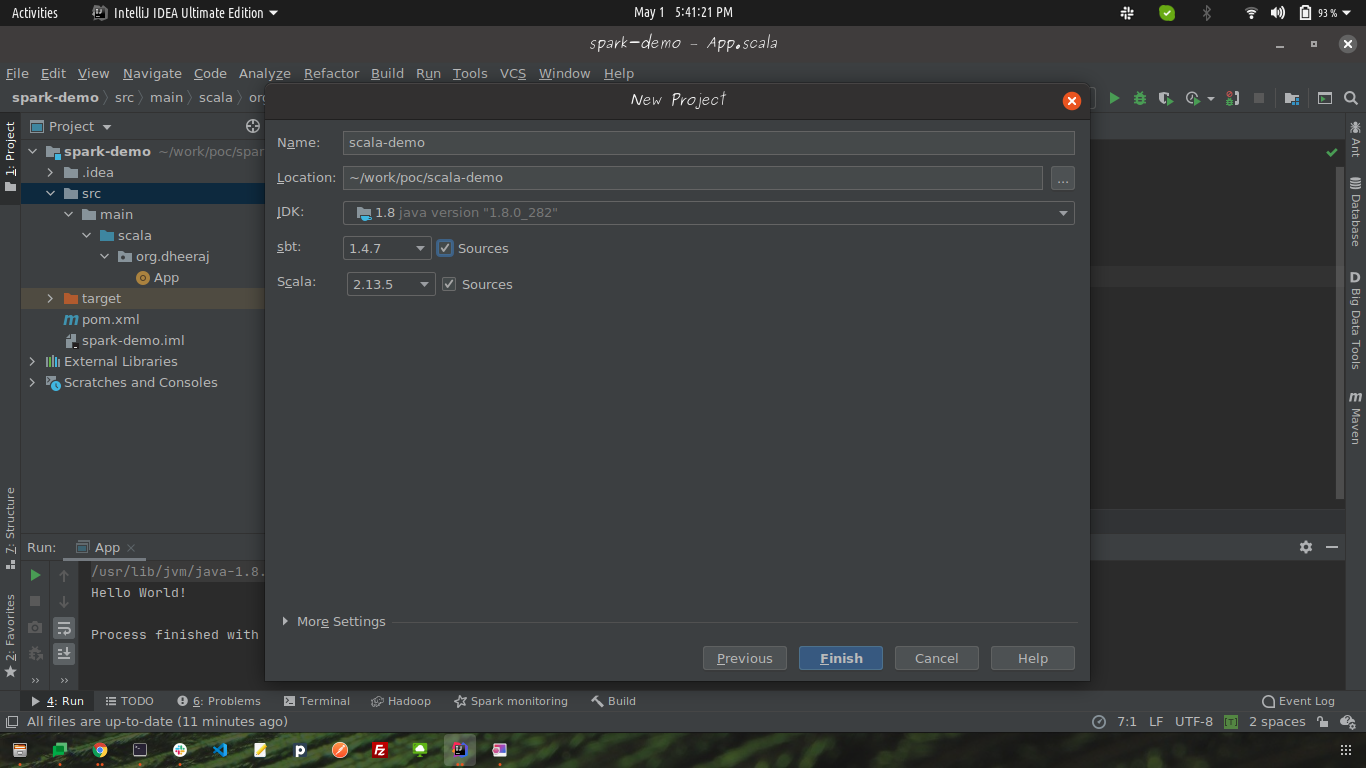

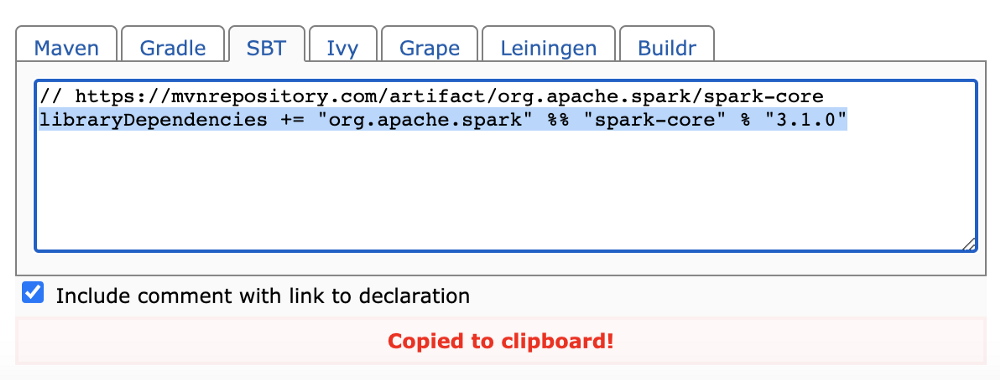

To add packages, navigate to the Manage Hub in Azure Synapse Studio. In the Workspace Packages section, select Upload to add files from your computer. You can add JAR files or Python wheel files. Next, select Apache Spark pools, which pulls up a list of pools to manage. Find the pool, then select Packages from the action menu.

The most important consideration is that you find a recent version where the Scala version is the same and the Spark version matches (when it's an external Spark library). The video I posted talks a bit more about how to find the right version. Keep in mind that a downloaded library may have additional dependencies that are not included in its JAR. To resolve missing dependencies, you have to download those JARs and add them to your workspace as well.

Before deploying on the cluster, it is good practice to test the script using spark-submit. To run spark-submit locally, it helps to set up Spark on Windows: install 7z so that you can unzip and untar the Spark tarball, then download the Spark 2.3 tarball. For Spark 2.3.1 the Scala version must be 2.11.x; I selected 2.11.8. When creating the project, set the project name and choose the correct Scala version in the next window. If your job needs extra libraries (for example, you want to download data from MySQL, do something with it, and save it elsewhere), you can attach the JARs manually (`--jars`) or download them from the Maven repository (`--packages`).

sbt-assembly is an sbt plugin originally ported from codahale's assembly-sbt, which I'm guessing was inspired by Maven's assembly plugin. It grew out of the burning desire to have a simple deploy procedure. The goal is simple: create an über JAR of your project with all of its dependencies.

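
The über JAR goal described above can be sketched as a small sbt build. This is a minimal sketch under stated assumptions, not a definitive setup: the project name, the JAR name, the sbt-assembly version, and the choice of `spark-sql` as the Spark module are placeholders for illustration, while Spark 2.3.1 and Scala 2.11.8 are the versions mentioned in the text.

```scala
// build.sbt
//
// Assumption: project/plugins.sbt already contains the sbt-assembly plugin, e.g.
//   addSbtPlugin("com.eed3si9n" % "sbt-assembly" % "2.1.1")
// (the plugin version is only an example).

name := "my-spark-job"      // hypothetical project name
version := "0.1.0"
scalaVersion := "2.11.8"    // Spark 2.3.1 is built against Scala 2.11.x

// Spark itself is marked Provided: the cluster (or a local Spark install)
// supplies it at runtime, so it is left out of the über JAR.
libraryDependencies += "org.apache.spark" %% "spark-sql" % "2.3.1" % Provided

// Optional: give the über JAR a fixed file name (hypothetical name).
assembly / assemblyJarName := "my-spark-job-assembly.jar"
```

With this in place, running `sbt assembly` should write the JAR under `target/scala-2.11/`, and that file can then be passed to spark-submit or uploaded as a workspace package.
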
In the screenshots for this post I use some dependencies for running Apache Kafka on a Synapse Apache Spark 3.1 pool, but many other libraries are available to add.

My preference is to search for the required package and then download the prebuilt JAR file. For open source libraries you may download the correct JAR from a public repository or build the JAR yourself from the source code. If you are new to packaging JAR files, that is beyond the scope of this article, but I recommend searching for how to build a "fat jar" so it will include all the dependencies (be sure to mark Spark libraries as provided if they are part of your dependencies).

Installing the prerequisites is a bit outside the scope of this note, but let me cover a few things. First, you must have R and Java installed. On Ubuntu, Java 8 can be installed with `sudo add-apt-repository ppa:webupd8team/java`, `sudo apt update`, and `sudo apt install oracle-java8-installer`.

When creating custom Scala libraries, be sure that the Scala version matches what your Spark pool has installed. Currently, for a Spark 3.1 pool you should use Scala 2.12, and for a Spark 2.4 pool you should use Scala 2.11. To see more details of what is installed on your pool, check the runtime documentation pages for Spark 3.1 and Spark 2.4.
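
One way to keep the Scala version aligned with the target pool is to derive it from the Spark version in the build itself. The following is a rough sketch, not an official Synapse recipe: the `spark.version` property name, the project name, and the exact patch versions (3.1.2, 2.4.x, 2.11.12, 2.12.15) are assumptions chosen for illustration. Only the pairing of Spark 3.1 with Scala 2.12 and Spark 2.4 with Scala 2.11 comes from the text.

```scala
// build.sbt
//
// Assumption: the pool's Spark version is passed as a JVM property, e.g.
//   sbt -Dspark.version=2.4.8 package
// The property name "spark.version" is made up for this sketch.
val sparkVersion = sys.props.getOrElse("spark.version", "3.1.2")

// Spark 2.4 pools use Scala 2.11; Spark 3.1 pools use Scala 2.12.
// The patch versions below are examples, not requirements.
val scalaForSpark =
  if (sparkVersion.startsWith("2.4")) "2.11.12" else "2.12.15"

name := "my-synapse-library"   // hypothetical project name
scalaVersion := scalaForSpark

// %% appends the Scala binary suffix (_2.11 or _2.12) to the artifact name,
// so the Spark dependency always matches the selected Scala version.
libraryDependencies += "org.apache.spark" %% "spark-sql" % sparkVersion % Provided
```

Building once per pool version this way gives one JAR per Scala binary version, so a library compiled for the wrong Scala version is less likely to end up on a pool.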

External libraries for the JVM are packaged as JAR files. These JAR files can be either third-party code or custom-built libraries. There are a variety of ways to add extra JAR files to the set of known libraries on your Spark cluster, but with Synapse Spark pools the options are a little different from those of a standard Apache Spark installation. I recommend using the Workspace packages feature to add JAR files and extend what your Synapse Spark pools can do.

I simply took these libraryDependencies entries from online sources, so I am not sure which versions are right.

We can use sbt to change the spark-daria namespace for all the code that's used by spark-pika. In this example, users are forced to use spark-daria version 2.3.1_0.24.0. This prevents users from accessing a different spark-daria version than the one specified in the spark-pika build.sbt file.
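
The spark-daria namespace change described above is usually done with a shade rule in sbt-assembly. Here is a minimal sketch, assuming the sbt-assembly plugin is already enabled for spark-pika. The spark-daria package prefix and the shaded target prefix are assumptions made for illustration and should be checked against the real library before use.

```scala
// build.sbt for spark-pika (requires the sbt-assembly plugin)
//
// Assumption: spark-daria's classes live under com.github.mrpowers.spark.daria.
// The target prefix "shadedSparkDariaForSparkPika" is an arbitrary name
// chosen for this sketch.
assembly / assemblyShadeRules := Seq(
  ShadeRule
    // "**" matches every class and subpackage under the prefix;
    // "@1" re-inserts the matched remainder after the new prefix.
    .rename("com.github.mrpowers.spark.daria.**" -> "shadedSparkDariaForSparkPika.@1")
    // Apply the rename to the project's own classes and to all dependency JARs.
    .inAll
)
```

After `sbt assembly`, the bundled spark-daria classes are relocated to the new prefix inside the JAR, which is why a different spark-daria version on the cluster does not clash with the one spark-pika was built against.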

Installing JAR files (for Java/Scala libraries)

Adding additional libraries to the Java Virtual Machine (Java/Scala code) is a common way to make additional functionality available in Apache Spark. The Spark source code has a whole directory of external Spark modules that can be added but are not installed on every Spark environment by default.

I am trying to download spark-core, spark-streaming, twitter4j, and spark-streaming-twitter in the build.sbt file below: `libraryDependencies += "org.apache.spark" % "spark-streaming-twitter_2.10" % "0.9.0-incubating"`.
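
The dependency line above hard-codes the Scala suffix (`_2.10`) in the artifact name. A slightly more robust sketch lets sbt add the suffix with `%%`. Treat this as an illustration under assumptions rather than a verified build: pinning all three Spark modules to 0.9.0-incubating and using Scala 2.10.4 are guesses based on the single version quoted in the text.

```scala
// build.sbt
//
// Assumption: every Spark module is pinned to the one version quoted above,
// and 2.10.4 is just an example Scala 2.10 patch release.
scalaVersion := "2.10.4"

val sparkVersion = "0.9.0-incubating"

libraryDependencies ++= Seq(
  // %% expands "spark-core" to "spark-core_2.10" based on scalaVersion,
  // so the suffix cannot drift out of sync with the compiler.
  "org.apache.spark" %% "spark-core"              % sparkVersion,
  "org.apache.spark" %% "spark-streaming"         % sparkVersion,
  "org.apache.spark" %% "spark-streaming-twitter" % sparkVersion
)
```

twitter4j should normally arrive as a transitive dependency of spark-streaming-twitter, so it may not need its own entry. If it does, its version has to be checked against what that Spark release expects.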


Author: Yolanda
